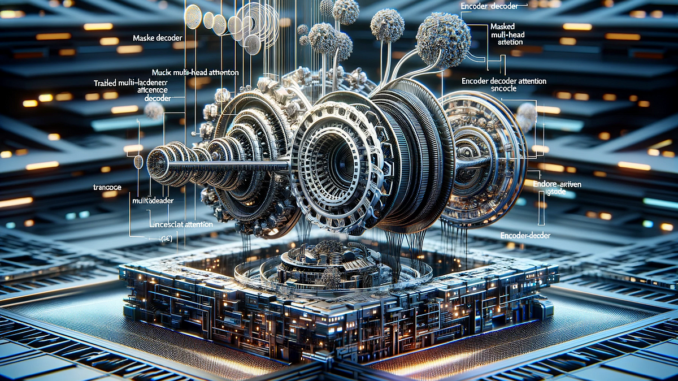

Exploring the Transformer’s Decoder Architecture: Masked Multi-Head Attention, Encoder-Decoder Attention, and Practical Implementation

This post was co-authored with Rafael Nardi.

In this article, we delve into the decoder component of the transformer architecture, focusing on its differences and similarities with the encoder. The decoder's distinguishing feature is its loop-like, iterative nature: it generates the output one token at a time, whereas the encoder processes the whole input sequence in a single pass. Central to the decoder are two modified forms of the attention mechanism: masked multi-head attention and encoder-decoder multi-head attention.

The masked multi-head attention in the decoder enforces sequential processing of tokens: the mask hides all subsequent positions, so no generated token can be influenced by the tokens that follow it. This masking is important for maintaining the order and coherence of the generated sequence. The encoder-decoder attention then combines the decoder's output (from masked attention) with the encoder's output. This last step brings the context of the input sentence into the decoder's process.
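To make the two attention variants concrete, here is a minimal NumPy sketch. It is not the code from our repository: it uses a single head, random vectors in place of real embeddings, and omits the learned query, key, and value projections, so the names `attention`, `encoder_output`, and `decoder_input` and all of the sizes are illustrative assumptions.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(q, k, v, mask=None):
    # Scaled dot-product attention for a single head.
    d_k = q.shape[-1]
    scores = q @ k.T / np.sqrt(d_k)
    if mask is not None:
        # Positions where mask is False get a very negative score,
        # so their softmax weight becomes zero.
        scores = np.where(mask, scores, -1e9)
    weights = softmax(scores, axis=-1)
    return weights @ v, weights

# Toy sizes (assumed for illustration only).
rng = np.random.default_rng(0)
d_model, src_len, tgt_len = 8, 5, 4
encoder_output = rng.normal(size=(src_len, d_model))  # stands in for the encoded source sentence
decoder_input = rng.normal(size=(tgt_len, d_model))   # stands in for the embedded target tokens

# Masked self-attention: a lower-triangular mask lets position i
# attend only to positions 0..i.
causal_mask = np.tril(np.ones((tgt_len, tgt_len), dtype=bool))
self_out, self_w = attention(decoder_input, decoder_input, decoder_input, causal_mask)

# Encoder-decoder attention: queries come from the decoder,
# keys and values come from the encoder output. No mask is needed here.
cross_out, cross_w = attention(self_out, encoder_output, encoder_output)

print(np.round(self_w, 2))  # upper triangle is zero
print(cross_w.shape)        # one row per target position, one column per source position
```

In the masked step, every weight above the diagonal is zero, which is exactly the "no peeking at later tokens" constraint. In the encoder-decoder step, each target position distributes its attention over all source positions.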

We will also demonstrate how these concepts are implemented using Python and NumPy. We have created a simple example of translating a sentence from English to Portuguese. This practical approach will help illustrate the inner workings of the decoder in a transformer model and provide a clearer understanding of its role in Large Language Models (LLMs).

As always, the code is available on our GitHub.

After describing the inner workings of the encoder in our previous article, we now turn to the next segment, the decoder. When comparing the two parts of the transformer, we believe it is instructive to emphasize the main similarities and differences. The attention mechanism is at the core of both. In the decoder, it occurs in two places, and both carry important modifications compared to the simplest version present in the encoder: masked multi-head attention and encoder-decoder multi-head attention. Talking about differences, we point out the…
