Modeling time series data and forecasting it are complex topics. There are many techniques that could be used to improve model performance for a forecasting job. We will discuss here a technique that may improve the way forecasting models absorb and utilize time features, and generalize from them. The main focus will be the creation of the seasonal features that feed the time series forecasting model in training — there are easy gains to be made here if you include the Fourier transform in the feature creation process.

This article assumes you are familiar with the basic aspects of time series forecasting — we will not discuss this topic in general, but only a refinement of one aspect of it. For an introduction to time series forecasting, see the Kaggle course on this topic — the technique discussed here builds on top of their lesson on seasonality.

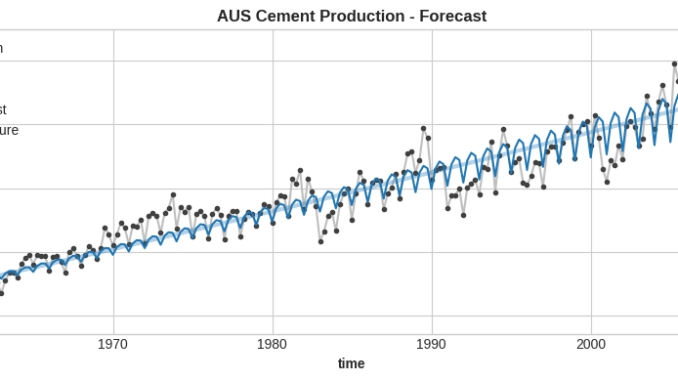

Consider the Quarterly Australian Portland Cement production dataset, showing the total quarterly production of Portland cement in Australia (in millions of tonnes) from 1956:Q1 to 2014:Q1.

df = pd.read_csv('Quarterly_Australian_Portland_Cement_production_776_10.csv', usecols=['time', 'value'])

# convert time from year float to a proper datetime format

df['time'] = df['time'].apply(lambda x: str(int(x)) + '-' + str(int(1 + 12 * (x % 1))).rjust(2, '0'))

df['time'] = pd.to_datetime(df['time'])

df = df.set_index('time').to_period()

df.rename(columns={'value': 'production'}, inplace=True)

production

time

1956Q1 0.465

1956Q2 0.532

1956Q3 0.561

1956Q4 0.570

1957Q1 0.529

... ...

2013Q1 2.049

2013Q2 2.528

2013Q3 2.637

2013Q4 2.565

2014Q1 2.229[233 rows x 1 columns]

This is time series data with a trend, seasonal components, and other attributes. The observations are made quarterly, spanning several decades. Let’s take a look at the seasonal components first, using the periodogram plot (all code is included in the companion notebook, linked at the end).

The periodogram shows the power density of spectral components (seasonal components). The strongest component is the one with a period equal to 4 quarters, or 1 year. This confirms the visual impression that the strongest up-and-down variations in the graph happen about 10 times per decade. There is also a component with a period of 2 quarters — that’s the same seasonal phenomenon, and it simply means the 4-quarter periodicity is not a simple sine wave, but has a more complex shape. We will ignore components with periods higher than 4, for simplicity.

If you try to fit a model to this data, perhaps in order to forecast the cement production for times beyond the end of the dataset, it would be a good idea to capture this yearly seasonality in a feature or set of features, and provide those to the model in training. Let’s see an example.

Seasonal components can be modeled in a number of different ways. Often, they are represented as time dummies, or as sine-cosine pairs. These are synthetic features with a period equal to the seasonality you’re trying to model, or a submultiple of it.

Yearly time dummies:

seasonal_year = DeterministicProcess(index=df.index, constant=False, seasonal=True).in_sample()

print(seasonal_year)

s(1,4) s(2,4) s(3,4) s(4,4)

time

1956Q1 1.0 0.0 0.0 0.0

1956Q2 0.0 1.0 0.0 0.0

1956Q3 0.0 0.0 1.0 0.0

1956Q4 0.0 0.0 0.0 1.0

1957Q1 1.0 0.0 0.0 0.0

... ... ... ... ...

2013Q1 1.0 0.0 0.0 0.0

2013Q2 0.0 1.0 0.0 0.0

2013Q3 0.0 0.0 1.0 0.0

2013Q4 0.0 0.0 0.0 1.0

2014Q1 1.0 0.0 0.0 0.0[233 rows x 4 columns]

Yearly sine-cosine pairs:

cfr = CalendarFourier(freq='Y', order=2)

seasonal_year_trig = DeterministicProcess(index=df.index, seasonal=False, additional_terms=[cfr]).in_sample()

with pd.option_context('display.max_columns', None, 'display.expand_frame_repr', False):

print(seasonal_year_trig)

sin(1,freq=A-DEC) cos(1,freq=A-DEC) sin(2,freq=A-DEC) cos(2,freq=A-DEC)

time

1956Q1 0.000000 1.000000 0.000000 1.000000

1956Q2 0.999963 0.008583 0.017166 -0.999853

1956Q3 0.017166 -0.999853 -0.034328 0.999411

1956Q4 -0.999963 -0.008583 0.017166 -0.999853

1957Q1 0.000000 1.000000 0.000000 1.000000

... ... ... ... ...

2013Q1 0.000000 1.000000 0.000000 1.000000

2013Q2 0.999769 0.021516 0.043022 -0.999074

2013Q3 0.025818 -0.999667 -0.051620 0.998667

2013Q4 -0.999917 -0.012910 0.025818 -0.999667

2014Q1 0.000000 1.000000 0.000000 1.000000[233 rows x 4 columns]

Time dummies can capture very complex waveforms of the seasonal phenomenon. On the flip side, if the period is N, then you need N time dummies — so, if the period is very long, you will need a lot of dummy columns, which may not be desirable. For non-penalized models, often just N-1 dummies are used — one is dropped to avoid collinearity issues (we will ignore that here).

Sine/cosine pairs can model arbitrarily long time periods. Each pair will model a pure sine waveform with some initial phase. As the waveform of the seasonal feature becomes more complex, you will need to add more pairs (increase the order of the output from CalendarFourier).

(Side note: we use a sine/cosine pair for each period we want to model. What we really want is just one column of A*sin(2*pi*t/T + phi) where A is the weight assigned by the model to the column, t is the time index of the series, and T is the component period. But the model cannot adjust the initial phase phi while fitting the data — the sine values are pre-computed. Luckily, the formula above is equivalent to: A1*sin(2*pi*t/T) + A2*cos(2*pi*t/T) and the model only needs to find the weights A1 and A2.)

TLDR: What these two techniques have in common is that they represent seasonality via a set of repetitive features, with values that cycle as often as once per the time period being modeled (time dummies, and the base sine/cosine pair), or several times per period (higher order sine/cosine). And each one of these features has values varying within a fixed interval: from 0 to 1, or from -1 to 1. These are all the features we have to model seasonality here.

Let’s see what happens when we fit a linear model on such seasonal features. But first, we need to add trend features to the features collection used to train the model.

Let’s create trend features and then join them with the seasonal_year time dummies generated above:

trend_order = 2

trend_year = DeterministicProcess(index=df.index, constant=True, order=trend_order).in_sample()

X = trend_year.copy()

X = X.join(seasonal_year)

const trend trend_squared s(1,4) s(2,4) s(3,4) s(4,4)

time

1956Q1 1.0 1.0 1.0 1.0 0.0 0.0 0.0

1956Q2 1.0 2.0 4.0 0.0 1.0 0.0 0.0

1956Q3 1.0 3.0 9.0 0.0 0.0 1.0 0.0

1956Q4 1.0 4.0 16.0 0.0 0.0 0.0 1.0

1957Q1 1.0 5.0 25.0 1.0 0.0 0.0 0.0

... ... ... ... ... ... ... ...

2013Q1 1.0 229.0 52441.0 1.0 0.0 0.0 0.0

2013Q2 1.0 230.0 52900.0 0.0 1.0 0.0 0.0

2013Q3 1.0 231.0 53361.0 0.0 0.0 1.0 0.0

2013Q4 1.0 232.0 53824.0 0.0 0.0 0.0 1.0

2014Q1 1.0 233.0 54289.0 1.0 0.0 0.0 0.0[233 rows x 7 columns]

This is the X dataframe (features) that will be used to train/validate the model. We’re modeling the data with quadratic trend features, plus the 4 time dummies needed for the yearly seasonality. The y dataframe (target) will be just the cement production numbers.

Let’s carve a validation set out of the data, containing the year 2010 observations. We will fit a linear model on the X features shown above and the y target represented by cement production (the test portion), and then we will evaluate model performance in validation. We will also do all of the above with a trend-only model that will only fit the trend features but ignores seasonality.

def do_forecast(X, index_train, index_test, trend_order):

X_train = X.loc[index_train]

X_test = X.loc[index_test]y_train = df['production'].loc[index_train]

y_test = df['production'].loc[index_test]

model = LinearRegression(fit_intercept=False)

_ = model.fit(X_train, y_train)

y_fore = pd.Series(model.predict(X_test), index=index_test)

y_past = pd.Series(model.predict(X_train), index=index_train)

trend_columns = X_train.columns.to_list()[0 : trend_order + 1]

model_trend = LinearRegression(fit_intercept=False)

_ = model_trend.fit(X_train[trend_columns], y_train)

y_trend_fore = pd.Series(model_trend.predict(X_test[trend_columns]), index=index_test)

y_trend_past = pd.Series(model_trend.predict(X_train[trend_columns]), index=index_train)

RMSLE = mean_squared_log_error(y_test, y_fore, squared=False)

print(f'RMSLE: {RMSLE}')

ax = df.plot(**plot_params, title='AUS Cement Production - Forecast')

ax = y_past.plot(color='C0', label='Backcast')

ax = y_fore.plot(color='C3', label='Forecast')

ax = y_trend_past.plot(ax=ax, color='C0', linewidth=3, alpha=0.333, label='Trend Past')

ax = y_trend_fore.plot(ax=ax, color='C3', linewidth=3, alpha=0.333, label='Trend Future')

_ = ax.legend()

do_forecast(X, index_train, index_test, trend_order)

RMSLE: 0.03846449744356434

Blue is train, red is validation. The full model is the sharp, thin line. The trend-only model is the wide, fuzzy line.

This is not bad, but there is one glaring issue: the model has learned a constant-amplitude yearly seasonality. Even though the yearly variation actually increases in time, the model can only stick to fixed-amplitude variations. Obviously, this is because we gave the model only fixed-amplitude seasonal features, and there’s nothing else in the features (target lags, etc) to help it overcome this issue.

Let’s dig deeper into the signal (the data) to see if there’s anything there that could help with the fixed-amplitude issue.

The periodogram will highlight all spectral components in the signal (all seasonal components in the data), and will provide an overview of their overall strength, but it’s an aggregate, a sum over the whole time interval. It says nothing about how the amplitude of each seasonal component may vary in time across the dataset.

To capture that variation, you have to use the Fourier spectrogram instead. It’s like the periodogram, but performed repeatedly over many time windows across the entire data set. Both periodogram and spectrogram are available as methods in the scipy library.

Let’s plot the spectrogram for the seasonal components with periods of 2 and 4 quarters, mentioned above. As always, the full code is in the companion notebook linked at the end.

spectrum = compute_spectrum(df['production'], 4, 0.1)

plot_spectrogram(spectrum, figsize_x=10)

What this diagram shows is the amplitude, the “strength” of the 2-quarter and 4-quarter components over time. They are pretty weak initially, but become very strong around 2010, which matches the variations you can see in the data set plot at the top of this article.

What if, instead of feeding the model fixed-amplitude seasonal features, we adjust the amplitude of these features in time, matching what the spectrogram tells us? Would the final model perform better in validation?

Let’s pick the 4-quarter seasonal component. We could fit a linear model (called the envelope model) on the order=2 trend of this component, on the train data (model.fit()), and extend that trend into validation / testing (model.predict()):

envelope_features = DeterministicProcess(index=X.index, constant=True, order=2).in_sample()spec4_train = compute_spectrum(df['production'].loc[index_train], max_period=4)

spec4_train

spec4_model = LinearRegression()

spec4_model.fit(envelope_features.loc[spec4_train.index], spec4_train['4.0'])

spec4_regress = pd.Series(spec4_model.predict(envelope_features), index=X.index)

ax = spec4_train['4.0'].plot(label='component envelope', color='gray')

spec4_regress.loc[spec4_train.index].plot(ax=ax, color='C0', label='envelope regression: past')

spec4_regress.loc[index_test].plot(ax=ax, color='C3', label='envelope regression: future')

_ = ax.legend()

The blue line is the strength of the variation of the 4-quarter component in the train data, fitted as a quadratic trend (order=2). The red line is the same thing, extended (predicted) over the validation data.

We have modeled the variation in time of the 4-quarter seasonal component. We can take the output from the envelope model, and multiply it by the time dummies corresponding to the 4-quarter seasonal component:

spec4_regress = spec4_regress / spec4_regress.mean()season_columns = ['s(1,4)', 's(2,4)', 's(3,4)', 's(4,4)']

for c in season_columns:

X[c] = X[c] * spec4_regress

print(X)

const trend trend_squared s(1,4) s(2,4) s(3,4) s(4,4)

time

1956Q1 1.0 1.0 1.0 0.179989 0.000000 0.000000 0.000000

1956Q2 1.0 2.0 4.0 0.000000 0.181109 0.000000 0.000000

1956Q3 1.0 3.0 9.0 0.000000 0.000000 0.182306 0.000000

1956Q4 1.0 4.0 16.0 0.000000 0.000000 0.000000 0.183581

1957Q1 1.0 5.0 25.0 0.184932 0.000000 0.000000 0.000000

... ... ... ... ... ... ... ...

2013Q1 1.0 229.0 52441.0 2.434701 0.000000 0.000000 0.000000

2013Q2 1.0 230.0 52900.0 0.000000 2.453436 0.000000 0.000000

2013Q3 1.0 231.0 53361.0 0.000000 0.000000 2.472249 0.000000

2013Q4 1.0 232.0 53824.0 0.000000 0.000000 0.000000 2.491139

2014Q1 1.0 233.0 54289.0 2.510106 0.000000 0.000000 0.000000[233 rows x 7 columns]

The 4 time dummies are not either 0 or 1 anymore. They’ve been multiplied by the component envelope, and now their amplitude varies in time, just like the envelope.

Let’s train the main model again, now using the modified time dummies.

do_forecast(X, index_train, index_test, trend_order)

RMSLE: 0.02546321729737165

We’re not aiming for a perfect fit here, since this is just a toy model, but it’s obvious the model performs better, it is more closely following the 4-quarter variations in the target. The performance metric has improved also, by 51%, which is not bad at all.

Improving model performance is a vast topic. The behavior of any model does not depend on a single feature, or on a single technique. However, if you’re looking to extract all you can out of a given model, you should probably feed it meaningful features. Time dummies, or sine/cosine Fourier pairs, are more meaningful when they reflect the variation in time of the seasonality they are modeling.

Adjusting the seasonal component’s envelope via the Fourier transform is particularly effective for linear models. Boosted trees do not benefit as much, but you can still see improvements almost no matter what model you use. If you’re using a hybrid model, where a linear model handles deterministic features (calendar, etc), while a boosted trees model handles more complex features, it is important that the linear model “gets it right”, therefore leaving less work to do for the other model.

It is also important that the envelope models you use to adjust seasonal features are only trained on the train data, and they do not see any testing data while in training, just like any other model. Once you adjust the envelope, the time dummies contain information from the target — they are not purely deterministic features anymore, that can be computed ahead of time over any arbitrary forecast horizon. So at this point information could leak from validation/testing back into training data if you’re not careful.

The data set used in this article is available here under the Public Domain (CC0) license:

The code used in this article can be found here:

A notebook submitted to the Store Sales — Time Series Forecasting competition on Kaggle, using ideas described in this article:

A GitHub repo containing the development version of the notebook submitted to Kaggle is here:

All images and code used in this article are created by the author.

Be the first to comment