Power BI Dataflows are a crucial component of the Power BI ecosystem that can simplify data preparation and enable easier scalability compared to regular datasets. There are several ways they can be used and with proper knowledge, they can be set up quickly and provide greater control over data pipelines. In this blog post, we’ll explore what Power BI Dataflows are and provide a comprehensive guide on how to use them effectively.

Sections

Understanding Power BI Dataflows

Power BI Dataflows act as a self-service data preparation layer within the Power BI ecosystem. They enable users to ingest, transform, and load data from various sources, providing a structured and clean foundation for building insightful reports and dashboards. They can be thought of as centralized datasets as they carry many of the exact options and interfaces that come with datasets.

Dataflows vs Datasets

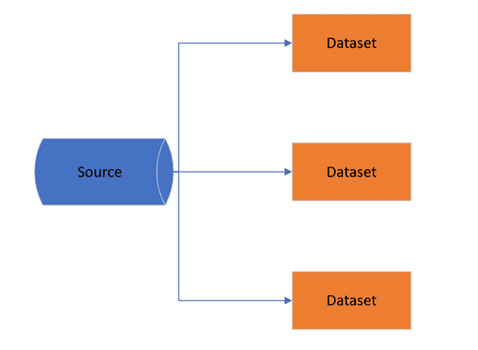

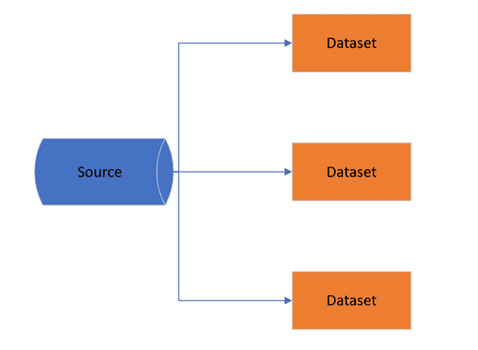

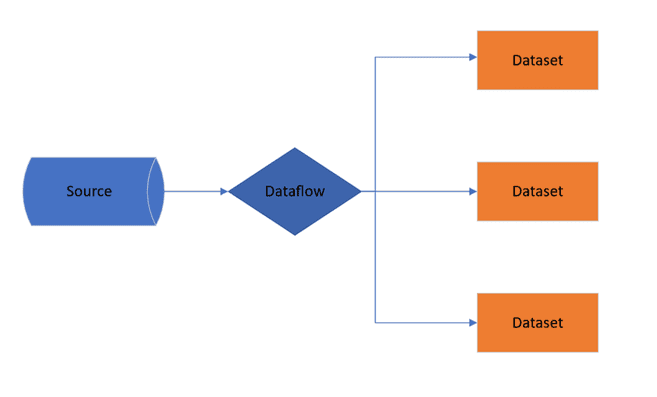

In most cases, a dataset can do everything a dataflow can, so why not just use datasets? Dataflows allow us to store transformations within the data further upstream. This means we can have any additional logic attached to this one source that other datasets will pull from instead of having to copy that logic across to multiple datasets.

Having this centralized means that instead of our reports hitting a source multiple times and refreshing off it, we are only using a single thread through our dataflow. This also simplifies the creation of new reports that need to be pulled from a main source within an organization.

What we have to do when creating a new report is pull the necessary tables that already have our applied steps. This removes a lot of the overhead for both tidying up the datasets as well as on the data.

Datasets

Dataflows

Getting Started

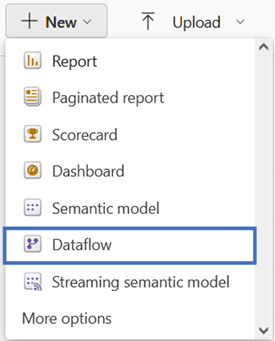

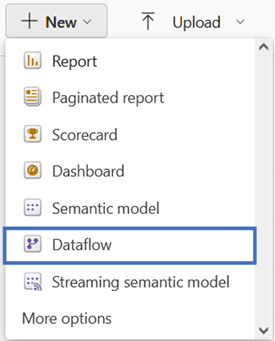

- Accessing Power BI Dataflows:

- To start using Dataflows, navigate to the Power BI service.

- Open the Power BI workspace where you want to create the Dataflow.

- Select the new dropdown and dataflows

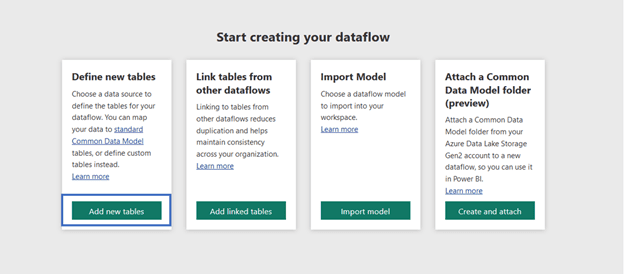

2. Creating a Blank Dataflow:

- The next part of the process is determined by how you want the dataflow to be set up, in this case we will be starting fresh, but dataflows allow the ability to connect from other workspaces or import from previous dataflows.

- Select “Define new tables”

3. Setting up the data source:

- Dataflows offer the same connection types found within datasets, so any existing data source should be easily connected to in the same way.

- If there is a gateway connection available, given the correct configuration it will automatically populate that gateway in the “Connection” field.

4. Transforming Data:

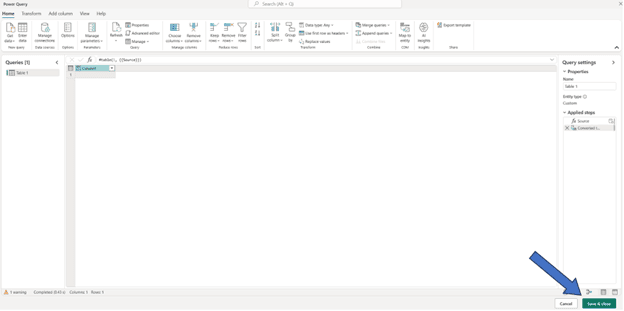

- Dataflows allow for the same power query transformations that can be made in a dataset, so it is very easy to transfer the knowledge over to this tool.

- Once a table from a source has been added into power query additional sources or additional tables can also be added in a similar method to datasets, by getting data, duplicating, or entering data.

- After the steps have all been applied and tables completed, we can continue with “Save & close” at the bottom of the page

5. Dataflow created:

- Now that the transformations have been made, we have some options in the use of the dataflow.

- Incremental refresh – Can be implemented to only refresh new data within the specified period. This is useful if there are not many changes in historical data and helps to cut down on the refresh times/usage.

- Scheduled refresh – Same as on datasets can configure set times to have the dataflow refresh. With the way the datasets will be pulling from this as their source it is recommended that the refresh times are triggered before datasets so that newer data flows into them.

Easy Setup

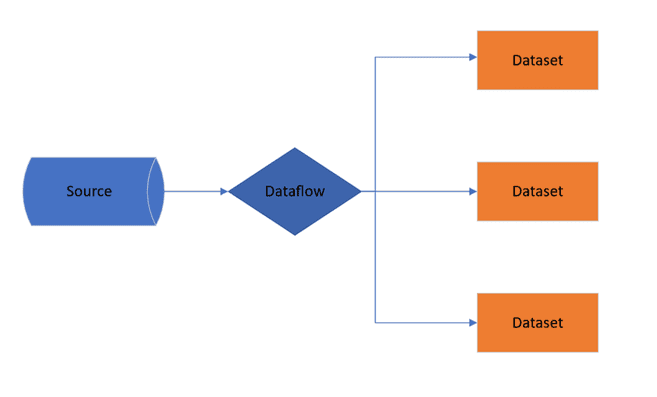

An excellent feature within Power BI is the ability to easily copy over a transformed table from a Power BI dataset into a dataflow.

Since dataflows use the same Power Query as datasets, we can copy and paste tables from open report files within the Power Query editor over to the dataflows. All the applied steps of the table will automatically be brought over and if it is connected to the previous source, you will see all the same data.

Dataset

Dataflow

This feature makes it extremely easy to set up dataflows based on previously constructed datasets and get started using them. This method can also be used between dataflows or of course datasets.

Limitations of Dataflows

There are some small limitations with dataflows, many of which frequent users of datasets will have no problems with. The only real downside that may be an annoyance to those with multiple users in a workspace is the limited visibility with ownership. Only owners of dataflows can directly see the tables and the options associated with them which makes it difficult to know the contents of a dataflow if not in direct ownership of it.

Even with all granted permissions the view of dataflows is very limited even compared to datasets/reports in workspaces. The only real workaround outside of passing off ownership to have a look into the query editor is to export the dataflow out.

Conclusion

Power BI Dataflows have emerged as a cornerstone in the data preparation journey, providing organizations with a scalable, efficient, and collaborative approach to data management. By embracing the power of Dataflows, organizations can have more control over their data while also making it easier than ever to begin working with them.

Be the first to comment