In terms of Machine Learning timelines, Random Forests (RFs), introduced in the seminal paper of Breimann ([1]), are ancient. Despite their age, they keep impressing with their performance and are a topic of active research. The goal of this article is to highlight what a versatile toolbox Random Forest methods have become, focussing on Generalized Random Forest (GRF) and Distributional Random Forest (DRF).

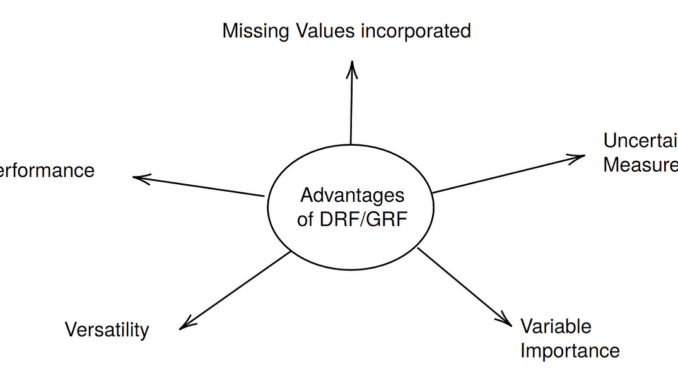

In short, the main idea underlying both methods is that the weights implicitly produced by RF can be used to estimate targets other than the conditional expectation. The idea of GRF is to use a Random Forest with a splitting criterion that is adapted to the target one has in mind (e.g., conditional mean, conditional quantiles, or the conditional treatment effect). The idea of DRF is to adapt the splitting criterion such that the whole conditional distribution can be estimated. From this object, many different targets can then be derived in a second step. In fact, I mostly talk about DRF in this article, as I am more familiar with this method and it is somewhat more streamlined (only one forest has to be fitted for a wide range of targets). However, all the advantages, indicated in the figure above, also apply to GRF and in fact, the DRF package in R is built upon the professional implementation of GRF. Moreover, the fact that the splitting criterion of GRF forests is adapted to the target means it can have better performance than DRF. This is particularly true for binary Y, where probability_forests() should be used. So, even though I talk mostly about DRF, GRF should be kept in mind throughout this article.

The goal of this article is to provide an overview with links to deeper reading in the corresponding sections. We will go through each of the points in the above figure clock-wise, reference the corresponding articles, and highlight it with a little example. I first quickly summarize the most important links to further reading below:

Versatility/Performance: Medium Article and Original Papers (DRF/GRF)

Missing Values Incorporated: Medium Article

Uncertainty Measures: Medium Article

Variable Importance: Medium Article

The Example

We take X_1, X_2, X_4, …, X_10 independently uniform between (-1,1) and create dependence between X_1 and X_3 by taking X_3=X_1 + uniform error. Then we simulate Y as

## Load packages and functions needed

library(drf)

library(mice)

source("drfnew_v2.R")

## The function drfnew_v2.R is available below, or on

## https://github.com/JeffNaef/drfupdate## Set parameters

set.seed(10)

n<-1000

##Simulate Data that experiences both a mean as well as sd shift

# Simulate from X

x1 <- runif(n,-1,1)

x2 <- runif(n,-1,1)

x3 <- x1+ runif(n,-1,1)

X0 <- matrix(runif(7*n,-1,1), nrow=n, ncol=7)

Xfull <- cbind(x1,x2, x3, X0)

colnames(Xfull)<-paste0("X", 1:10)

# Simulate dependent variable Y

Y <- as.matrix(rnorm(n,mean = 0.8*(x1 > 0), sd = 1 + 1*(x2 > 0)))

##Also add MAR missing values using ampute from the mice package

X<-ampute(Xfull)$amp

head(cbind(Y,X))

Y X1 X2 X3 X4 X5

1 -3.0327466 -0.4689827 0.06161759 0.27462737 NA -0.624463079

2 1.2582824 -0.2557522 0.36972181 NA -0.04100963 0.009518047

3 -0.8781940 0.1457067 -0.23343321 NA -0.64519687 -0.945426305

4 3.1595623 0.8164156 0.90997600 0.69184618 -0.20573331 -0.007404298

5 1.1176545 -0.5966361 NA -1.21276055 0.62845399 0.894703422

6 -0.4377359 0.7967794 -0.92179989 -0.03863182 0.88271415 -0.237635732

X6 X7 X8 X9 X10

1 -0.9290009 0.5401628 0.39735433 -0.7434697 0.8807558

2 -0.2885927 0.3805251 -0.09051334 -0.7446170 0.9935311

3 -0.5022541 0.3009541 0.29424395 0.5554647 -0.5341800

4 0.7583608 -0.8506881 0.22758566 -0.1596993 -0.7161976

5 -0.3640422 0.8051613 -0.46714833 0.4318039 -0.8674060

6 -0.3577590 -0.7341207 0.85504668 -0.6933918 0.4656891

Notice that with the function ampute from the mice package, we put Missing not at Random (MAR) missing values on X to highlight the ability of GRF/DRF to deal with missing values. Moreover, in the above process only X_1 and X_2 are relevant for predicting Y, all other variables are “noise” variables. Such a “sparse” setting might actually be common in real-life datasets.

We now choose a test point for this example that we will use throughout:

x<-matrix(c(0.2, 0.4, runif(8,-1,1)), nrow=1, ncol=10)

print(x)[,1] [,2] [,3] [,4] [,5] [,6] [,7] [,8]

[1,] 0.2 0.4 0.7061058 0.8364877 0.2284314 0.7971179 0.78581 0.5310279

[,9] [,10]

[1,] -0.5067102 0.6918785

Versatility

DRF estimates the conditional distribution P_{Y|X=x} in the form of simple weights:

From these weights, a wide range of targets can be calculated, or they can be used to simulate from the conditional distribution. A good reference for its versatility is the original research article, where a lot of examples were used, as well as the corresponding medium article.

In the example, we first simulate from this distribution:

DRF<-drfCI(X=X, Y=Y, B=50,num.trees=1000, min.node.size = 5)

DRFpred<-predictdrf(DRF, newdata=x)## Sample from P_{Y| X=x}

Yxs<-Y[sample(1:n, size=n, replace = T, DRFpred$weights[1,])]

hist(Yxs, prob=T)

z<-seq(-6,7,by=0.01)

d<-dnorm(z, mean=0.8 * (x[1] > 0), sd=(1+(x[2] > 0)))

lines(z,d, col="darkred" )

The plot shows the approximate draws from the conditional distribution overlaid with the true density in red. We now use this to estimate the conditional expectation and the conditional (0.05, 0.95) quantiles at x:

# Calculate quantile prediction as weighted quantiles from Y

qx <- quantile(Yxs, probs = c(0.05,0.95))# Calculate conditional mean prediction

mux <- mean(Yxs)

# True quantiles

q1<-qnorm(0.05, mean=0.8 * (x[1] > 0), sd=(1+(x[2] > 0)))

q2<-qnorm(0.95, mean=0.8 * (x[1] > 0), sd=(1+(x[2] > 0)))

mu<-0.8 * (x[1] > 0)

hist(Yxs, prob=T)

z<-seq(-6,7,by=0.01)

d<-dnorm(z, mean=0.8 * (x[1] > 0), sd=(1+(x[2] > 0)))

lines(z,d, col="darkred" )

abline(v=q1,col="darkred" )

abline(v=q2, col="darkred" )

abline(v=qx[1], col="darkblue")

abline(v=qx[2], col="darkblue")

abline(v=mu, col="darkred")

abline(v=mux, col="darkblue")

Likewise, many targets can be calculated with GRF, only in this case for each of those two targets a different forest would need to be fit. In particular, regression_forest() for the conditional expectation and quantile_forest() for the quantiles.

What GRF cannot do is deal with multivariate targets, which is possible with DRF as well.

Performance

Despite all the work on powerful new (nonparametric) methods, such as neural nets, tree-based methods are consistently able to beat competitors on tabular data. See e.g., this fascinating paper, or this older paper on the strength of RF in classification.

To be fair, with parameter tuning, boosted tree methods, such as XGboost, often take the lead, at least when it comes to classical prediction (which corresponds to conditional expectation estimation). Nonetheless, the robust performance RF methods tend to have without any tuning is remarkable. Moreover, there has also been work on improving the performance of Random Forests, for example, the hedged Random Forest approach.

Missing Values Incorporated

“Missing incorporated in attributes criterion” (MIA) from this paper is a very simple but very powerful idea that allows tree-based methods to handle missing data. This was implemented in the GRF R package and so it is also available in DRF. The details are also explained in this medium article. As simple as the concept is, it works remarkably well in practice: In the above example, DRF had no trouble handling substantial MAR missingness in the training data X (!)

Uncertainty Measures

As a statistician I don’t just want point estimates (even of a distribution), but also a measure of estimation uncertainty of my parameters (even if the “parameter” is my whole distribution). Turns out that a simple additional subsampling baked into DRF/GRF allows for a principled uncertainty quantification for large sample sizes. The theory behind this in the case of DRF is derived in this research article, but I also explain it in this medium article. GRF has all the theory in the original paper.

We adapt this for the above example:

# Calculate uncertainty

alpha<-0.05

B<-length(DRFpred$weightsb)

qxb<-matrix(NaN, nrow=B, ncol=2)

muxb<-matrix(NaN, nrow=B, ncol=1)

for (b in 1:B){

Yxsb<-Y[sample(1:n, size=n, replace = T, DRFpred$weightsb[[b]][1,])]

qxb[b,] <- quantile(Yxsb, probs = c(0.05,0.95))

muxb[b] <- mean(Yxsb)

}

CI.lower.q1 <- qx[1] - qnorm(1-alpha/2)*sqrt(var(qxb[,1]))

CI.upper.q1 <- qx[1] + qnorm(1-alpha/2)*sqrt(var(qxb[,1]))CI.lower.q2 <- qx[2] - qnorm(1-alpha/2)*sqrt(var(qxb[,2]))

CI.upper.q2 <- qx[2] + qnorm(1-alpha/2)*sqrt(var(qxb[,2]))

CI.lower.mu <- mux - qnorm(1-alpha/2)*sqrt(var(muxb))

CI.upper.mu <- mux + qnorm(1-alpha/2)*sqrt(var(muxb))

hist(Yxs, prob=T)

z<-seq(-6,7,by=0.01)

d<-dnorm(z, mean=0.8 * (x[1] > 0), sd=(1+(x[2] > 0)))

lines(z,d, col="darkred" )

abline(v=q1,col="darkred" )

abline(v=q2, col="darkred" )

abline(v=qx[1], col="darkblue")

abline(v=qx[2], col="darkblue")

abline(v=mu, col="darkred")

abline(v=mux, col="darkblue")

abline(v=CI.lower.q1, col="darkblue", lty=2)

abline(v=CI.upper.q1, col="darkblue", lty=2)

abline(v=CI.lower.q2, col="darkblue", lty=2)

abline(v=CI.upper.q2, col="darkblue", lty=2)

abline(v=CI.lower.mu, col="darkblue", lty=2)

abline(v=CI.upper.mu, col="darkblue", lty=2)

As can be seen from the above code, we essentially have B subtrees that can be used to calculate the measure each time. From these B samples of mean and quantiles, we can then calculate variances and use a normal approximation to obtain (asymptotic) confidence intervals seen in the dashed line in the Figure. Again, all of this can be done despite the missing values in X(!)

Variable Importance

A final important aspect of Random Forests is efficiently calculated variable importance measures. While traditional measures are somewhat ad hoc, for the traditional RF and for DRF, there are now principled measures available, as explained in this medium article. For RF, the Sobol-MDA method reliably identifies the variables most important for conditional expectation estimation, whereas for DRF, the MMD-MDA identifies the variables most important for the estimation of the distribution overall. As discussed in the article, using the idea of projected Random Forests, these measures can be very efficiently implemented. We demonstrate this in the example with a less efficient implementation of the MMD variable importance measure:

## Variable importance for conditional Quantile Estimation## For the conditional quantiles we use a measure that considers the whole distribution,

## i.e. the MMD based measure of DRF.

MMDVimp <- compute_drf_vimp(X=X,Y=Y, print=F)

sort(MMDVimp, decreasing = T)

X2 X1 X8 X6 X3 X10

0.852954299 0.124110913 0.012194176 0.009578300 0.008191663 0.007517931

X9 X7 X5 X4

0.006861688 0.006632175 0.005257195 0.002401974

Here both X_1 and X_2 are correctly identified as being the most relevant variable when trying to estimate the distribution. Remarkably, despite the dependence of X_3 and X_1, the measure correctly quantifies that X_3 is not important for the prediction of the distribution of Y. This is something that the original MDA measure of Random Forests tends to do wrong, as demonstrated in the medium article. Moreover, notice again that the missing values in X are no problem here.

Conclusion

GRF/DRF and also the traditional Random Forest should not be missing in the toolbox of any data scientist. While methods like XGboost can have a better performance in traditional prediction, the many strengths of modern RF-based approaches render them an incredibly versatile tool.

Of course, one should keep in mind that these methods are still fully nonparametric, and a lot of data points are needed for the fit to make sense. This is in particularly true for the uncertainty quantification, which is only valid asymptotically, i.e. for “large” samples.

Literature

[1] Breiman, L. (2001). Random forests. Machine learning, 45(1):5–32.

Appendix: Code

require(drf)

require(Matrix)

require(kernlab)drfCI <- function(X, Y, B, sampling = "binomial", ...) {

n <- dim(X)[1]

# compute point estimator and DRF per halfsample

# weightsb: B times n matrix of weights

DRFlist <- lapply(seq_len(B), function(b) {

# half-sample index

indexb <- if (sampling == "binomial") {

seq_len(n)[as.logical(rbinom(n, size = 1, prob = 0.5))]

} else {

sample(seq_len(n), floor(n / 2), replace = FALSE)

}

## Using normal Bootstrap on the data and refitting DRF

DRFb <-

drfown(X = X[indexb, , drop = F], Y = Y[indexb, , drop = F], ...)

return(list(DRF = DRFb, indices = indexb))

})

return(list(DRFlist = DRFlist, X = X, Y = Y))

}

predictdrf <- function(DRF, newdata, functional = NULL, ...) {

##Predict for testpoints in newdata, with B weights for each point, representing

##uncertainty

ntest <- nrow(x)

n <- nrow(DRF$Y)

weightsb <- lapply(DRF$DRFlist, function(l) {

weightsbfinal <- Matrix(0, nrow = ntest, ncol = n, sparse = TRUE)

weightsbfinal[, l$indices] <- predict(l$DRF, x)$weights

return(weightsbfinal)

})

weightsall <- Reduce("+", weightsb) / length(weightsb)

if (!is.null(functional)) {

stopifnot("Not yet implemented for several x" = ntest == 1)

thetahatb <-

lapply(weightsb, function(w)

functional(weights = w, X = DRF$X, Y = DRF$Y, x = x))

thetahatbforvar <- do.call(rbind, thetahatb)

thetahat <- functional(weights = weightsall, X = DRF$X, Y = DRF$Y, x = x)

thetahat <- matrix(thetahat, nrow = dim(x)[1])

var_est <- if (dim(thetahat)[2] > 1) {

a <- sweep(thetahatbforvar, 2, thetahat, FUN = "-")

crossprod(a, a) / B

} else {

mean((c(thetahatbforvar) - c(thetahat)) ^ 2)

}

return(list(weights = weightsall, thetahat = thetahat, weightsb = weightsb,

var_est = var_est))

} else {

return(list(weights = weightsall, weightsb = weightsb))

}

}

drfown <- function(X, Y,

num.trees = 500,

splitting.rule = "FourierMMD",

num.features = 10,

bandwidth = NULL,

response.scaling = TRUE,

node.scaling = FALSE,

sample.weights = NULL,

sample.fraction = 0.5,

mtry = min(ceiling(sqrt(ncol(X)) + 20), ncol(X)),

min.node.size = 15,

honesty = TRUE,

honesty.fraction = 0.5,

honesty.prune.leaves = TRUE,

alpha = 0.05,

imbalance.penalty = 0,

compute.oob.predictions = TRUE,

num.threads = NULL,

seed = stats::runif(1, 0, .Machine$integer.max),

compute.variable.importance = FALSE) {

# initial checks for X and Y

if (is.data.frame(X)) {

if (is.null(names(X))) {

stop("the regressor should be named if provided under data.frame format.")

}

if (any(apply(X, 2, class) %in% c("factor", "character"))) {

any.factor.or.character <- TRUE

X.mat <- as.matrix(fastDummies::dummy_cols(X, remove_selected_columns = TRUE))

} else {

any.factor.or.character <- FALSE

X.mat <- as.matrix(X)

}

mat.col.names.df <- names(X)

mat.col.names <- colnames(X.mat)

} else {

X.mat <- X

mat.col.names <- NULL

mat.col.names.df <- NULL

any.factor.or.character <- FALSE

}

if (is.data.frame(Y)) {

if (any(apply(Y, 2, class) %in% c("factor", "character"))) {

stop("Y should only contain numeric variables.")

}

Y <- as.matrix(Y)

}

if (is.vector(Y)) {

Y <- matrix(Y,ncol=1)

}

#validate_X(X.mat)

if (inherits(X, "Matrix") && !(inherits(X, "dgCMatrix"))) {

stop("Currently only sparse data of class 'dgCMatrix' is supported.")

}

drf:::validate_sample_weights(sample.weights, X.mat)

#Y <- validate_observations(Y, X)

# set legacy GRF parameters

clusters <- vector(mode = "numeric", length = 0)

samples.per.cluster <- 0

equalize.cluster.weights <- FALSE

ci.group.size <- 1

num.threads <- drf:::validate_num_threads(num.threads)

all.tunable.params <- c("sample.fraction", "mtry", "min.node.size", "honesty.fraction",

"honesty.prune.leaves", "alpha", "imbalance.penalty")

# should we scale or not the data

if (response.scaling) {

Y.transformed <- scale(Y)

} else {

Y.transformed <- Y

}

data <- drf:::create_data_matrices(X.mat, outcome = Y.transformed, sample.weights = sample.weights)

# bandwidth using median heuristic by default

if (is.null(bandwidth)) {

bandwidth <- drf:::medianHeuristic(Y.transformed)

}

args <- list(num.trees = num.trees,

clusters = clusters,

samples.per.cluster = samples.per.cluster,

sample.fraction = sample.fraction,

mtry = mtry,

min.node.size = min.node.size,

honesty = honesty,

honesty.fraction = honesty.fraction,

honesty.prune.leaves = honesty.prune.leaves,

alpha = alpha,

imbalance.penalty = imbalance.penalty,

ci.group.size = ci.group.size,

compute.oob.predictions = compute.oob.predictions,

num.threads = num.threads,

seed = seed,

num_features = num.features,

bandwidth = bandwidth,

node_scaling = ifelse(node.scaling, 1, 0))

if (splitting.rule == "CART") {

##forest <- do.call(gini_train, c(data, args))

forest <- drf:::do.call.rcpp(drf:::gini_train, c(data, args))

##forest <- do.call(gini_train, c(data, args))

} else if (splitting.rule == "FourierMMD") {

forest <- drf:::do.call.rcpp(drf:::fourier_train, c(data, args))

} else {

stop("splitting rule not available.")

}

class(forest) <- c("drf")

forest[["ci.group.size"]] <- ci.group.size

forest[["X.orig"]] <- X.mat

forest[["is.df.X"]] <- is.data.frame(X)

forest[["Y.orig"]] <- Y

forest[["sample.weights"]] <- sample.weights

forest[["clusters"]] <- clusters

forest[["equalize.cluster.weights"]] <- equalize.cluster.weights

forest[["tunable.params"]] <- args[all.tunable.params]

forest[["mat.col.names"]] <- mat.col.names

forest[["mat.col.names.df"]] <- mat.col.names.df

forest[["any.factor.or.character"]] <- any.factor.or.character

if (compute.variable.importance) {

forest[['variable.importance']] <- variableImportance(forest, h = bandwidth)

}

forest

}

#' Variable importance for Distributional Random Forests

#'

#' @param X Matrix with input training data.

#' @param Y Matrix with output training data.

#' @param X_test Matrix with input testing data. If NULL, out-of-bag estimates are used.

#' @param num.trees Number of trees to fit DRF. Default value is 500 trees.

#' @param silent If FALSE, print variable iteration number, otherwise nothing is print. Default is FALSE.

#'

#' @return The list of importance values for all input variables.

#' @export

#'

#' @examples

compute_drf_vimp <- function(X, Y, X_test = NULL, num.trees = 500, silent = FALSE){

# fit initial DRF

bandwidth_Y <- drf:::medianHeuristic(Y)

k_Y <- rbfdot(sigma = bandwidth_Y)

K <- kernelMatrix(k_Y, Y, Y)

DRF <- drfown(X, Y, num.trees = num.trees)

wall <- predict(DRF, X_test)$weights

# compute normalization constant

wbar <- colMeans(wall)

wall_wbar <- sweep(wall, 2, wbar, "-")

I0 <- as.numeric(sum(diag(wall_wbar %*% K %*% t(wall_wbar))))

# compute drf importance dropping variables one by one

I <- sapply(1:ncol(X), function(j) {

if (!silent){print(paste0('Running importance for variable X', j, '...'))}

DRFj <- drfown(X = X[, -j, drop=F], Y = Y, num.trees = num.trees)

DRFpredj <- predict(DRFj, X_test[, -j])

wj <- DRFpredj$weights

Ij <- sum(diag((wj - wall) %*% K %*% t(wj - wall)))/I0

return(Ij)

})

# compute retraining bias

DRF0 <- drfown(X = X, Y = Y, num.trees = num.trees)

DRFpred0 = predict(DRF0, X_test)

w0 <- DRFpred0$weights

vimp0 <- sum(diag((w0 - wall) %*% K %*% t(w0 - wall)))/I0

# compute final importance (remove bias & truncate negative values)

vimp <- sapply(I - vimp0, function(x){max(0,x)})

names(vimp)<-colnames(X)

return(vimp)

}

Be the first to comment